Nikan Doosti

Man can do what he wills but he cannot will what he wills. — Arthur Schopenhauer

Through my research, I like to produce practical and accessible tools that scientists, content creators, engineers, and end users can use as a bridge between different domains. I plan to do this by devising self-supervised, physics-aware deep learning methods. I am interested in applications of AI (mostly deep learning) in computer graphics, science, and digital twins more generally. Currently, I am looking for a unique PhD opportunity, and I plan to continue my research career in industry!

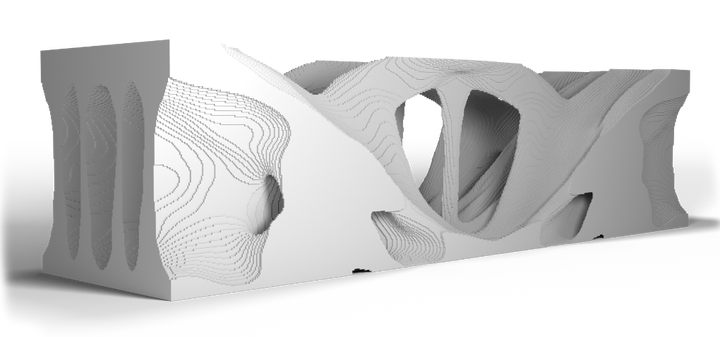

I am an MSc Computer Engineering graduate from Iran University of Science and Technology (IUST), and I currently work as a machine learning engineer. Previously, I did a research internship in the Artificial Intelligence aided Design and Manufacturing (AIDAM) group at Max Planck Institut für Informatik (MPI-INF). I worked on Structural Topology Optimization (TO) via Deep Learning (DL) under the supervision of Dr. Vahid Babaei (AIDAM group leader) and in collaboration with Prof. Julian Panetta (Assistant Professor at University of California, Davis). We published this work at the ACM Symposium on Computational Fabrication. This opportunity allowed me to realize the importance and applicability of AI in conjunction with science and interdisciplinary work. Prior to that, I focused mainly on classic digital image processing, data-driven deep computer vision, and data science. In the past couple of years, I have grown interested in applications of AI in medicine and computational neuroscience, but I have not been lucky enough to work on them professionally.

In my spare time, I play video games, read about history, and enjoy stand-up comedy. On top of that, I find the idea and practice of open source amazing, and I use GitHub as my main social media app.

news

| Date | News |
|---|---|
| Oct 23, 2022 | I defended my master’s thesis with a full grade at Iran University of Science and Technology. |
| Apr 24, 2022 | I joined Nahal Gasht as a full-time machine learning engineer. |
| Mar 04, 2022 | I gave a talk at the Toronto Geometry Colloquium about my SCF21 publication. |
| Oct 01, 2021 | We published and presented a peer-reviewed paper at the SCF21 conference! |

latest posts

| Date | Post |
|---|---|
| Jan 01, 2024 | An empty post as the template |